Research Areas

I am broadly interested in robotics and autonomous systems. The long-term goal of my research is to make robots safe, yet "street-smart". By street-smart, I mean that a robot should be able to make quick and intelligent decisions in novel and complex situations based on what it sees. At the same time, the robot should be able to reason about the consequences of its decisions for its own safety and for the safety of the other robots and humans around it.

In the short term, my research focuses on understanding how machine learning can be used to achieve these goals. In particular, I focus on two aspects of robot learning:

(a) how can robots learn to make intelligent decisions in a data-efficient manner by combining learning with classical, model-based planning and control methods, especially when the robot relies on perception and vision sensors?

(b) how can robots maintain safety while using learning for decision-making?

1) Data-efficient task-based learning using models and optimal control

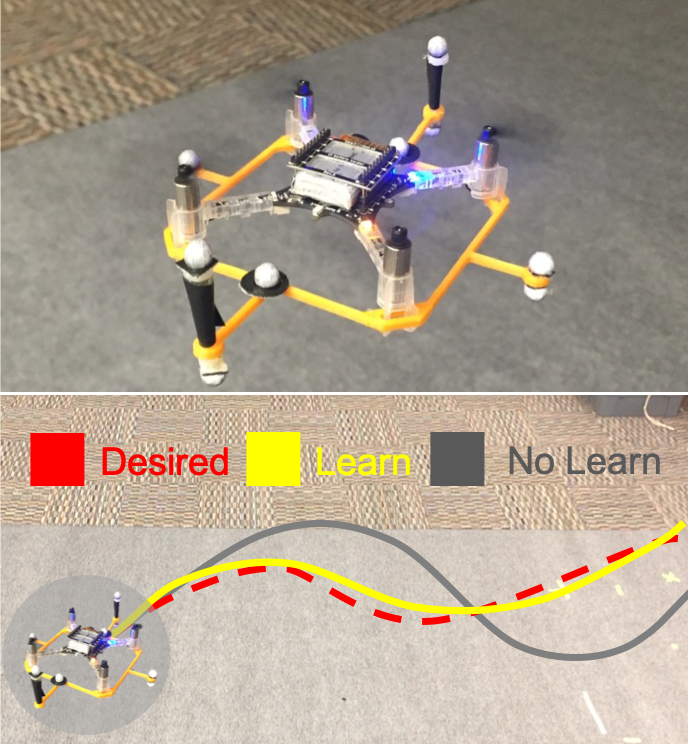

Autonomous systems will inevitably experience external effects in unstructured environments, which are often hard to model using first principles. I have developed learning frameworks that leverage methods from system identification (SysID) and optimal control to efficiently capture these effects during the controller design process.

These frameworks include both learning the residual dynamics of the system on top of the already known system structure and learning models of external effects that are specific to the control task at hand. The latter does not necessarily learn the most accurate dynamics model; instead, it identifies a “coarse” model that can be learned from a small amount of data and yet yields the best closed-loop performance when provided to the optimal control method.
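As a minimal sketch of the residual-learning idea (not the actual frameworks described above), the snippet below assumes a scalar velocity model whose known part is v[k+1] = v[k] + dt * u[k] and whose unmodeled effect is a hypothetical quadratic drag term. The residual that the nominal model cannot explain is fit by linear least squares on hand-picked features. The drag coefficient, the feature map, and all function names are illustrative assumptions.

```python
import numpy as np

DT = 0.05
DRAG = 0.3  # hypothetical "unknown" drag coefficient, used only to simulate data


def true_step(v, u):
    # Ground-truth dynamics: the known part (u) plus an unmodeled drag term.
    return v + DT * (u - DRAG * v * np.abs(v))


def nominal_step(v, u):
    # First-principles model the designer already has (it omits the drag).
    return v + DT * u


def features(v):
    # Hypothetical basis for the residual; a GP or neural network could be used instead.
    return np.stack([v, v * np.abs(v)], axis=-1)


def fit_residual(v, u, v_next):
    # Residual acceleration that the nominal model fails to explain.
    r = (v_next - nominal_step(v, u)) / DT
    theta, *_ = np.linalg.lstsq(features(v), r, rcond=None)
    return theta


def augmented_step(v, u, theta):
    # Known structure plus the learned residual.
    return nominal_step(v, u) + DT * features(v) @ theta


rng = np.random.default_rng(0)
v = rng.uniform(-3.0, 3.0, size=200)
u = rng.uniform(-1.0, 1.0, size=200)
v_next = true_step(v, u) + rng.normal(0.0, 1e-3, size=200)  # small sensor noise

# Fit on the first 150 samples, evaluate one-step prediction error on the rest.
theta = fit_residual(v[:150], u[:150], v_next[:150])
err_nom = np.sqrt(np.mean((nominal_step(v[150:], u[150:]) - v_next[150:]) ** 2))
err_aug = np.sqrt(np.mean((augmented_step(v[150:], u[150:], theta) - v_next[150:]) ** 2))
print(theta, err_nom, err_aug)
```

On the held-out samples, the augmented model's one-step error should be far below the nominal model's, while the nominal structure is reused unchanged; only the residual is learned.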

Learning residual aerodynamic effects for improved control performance.

aDOBO uses learning with optimal control to stir jelly in a given pattern.

Our presentation on aDOBO from the IEEE Conference on Decision and Control (CDC), 2017.

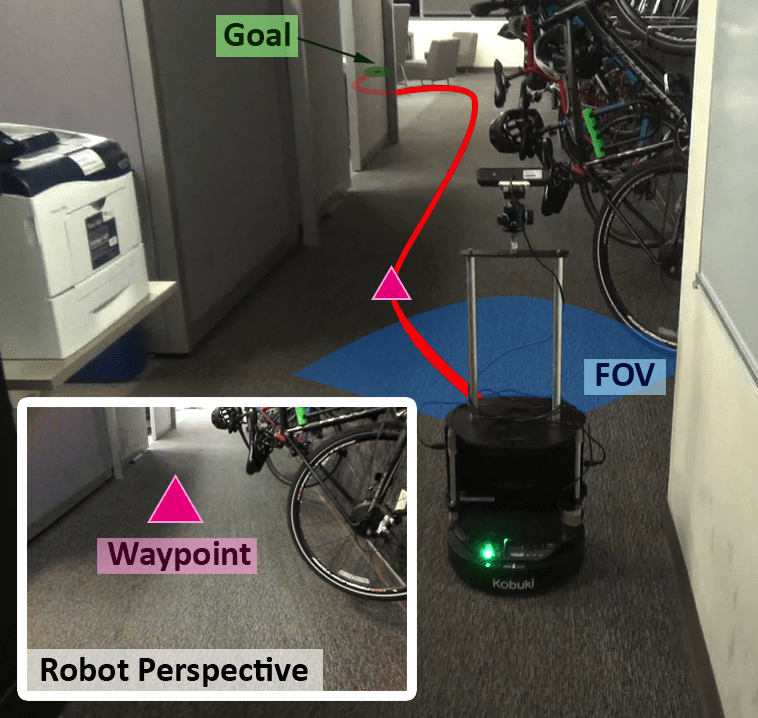

2) Data-efficient architectures for learning-based perception with model-based control

In many applications of interest, simple and well-understood dynamics models are sufficient for control; it is rather the vision and perception components that require learning. In this line of work, I focus on developing perception-action loops that efficiently combine deep learning-based perception with an underlying dynamics model for control, for example to navigate in a priori unknown environments. Leveraging the underlying dynamics and feedback-based control not only accelerates learning but also leads to trajectories that are robust to variations in physical properties and to noise in actuation.
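A minimal sketch of such a perception-action loop, assuming a unicycle robot: a learned module outputs a waypoint, and a model-based feedback controller drives the robot there. Here `learned_waypoint` is a hypothetical stub standing in for a trained network, and the controller is a standard go-to-goal law in polar coordinates chosen for brevity; it is not the planner or controller used in the work described above, and the gains are illustrative.

```python
import math


def learned_waypoint(image):
    # Hypothetical stand-in for a trained network mapping an image to a
    # waypoint (x, y) in the robot's starting frame; this stub ignores the image.
    return (2.0, 1.0)


def wrap(angle):
    # Wrap an angle to [-pi, pi].
    return math.atan2(math.sin(angle), math.cos(angle))


def go_to_waypoint(goal, state=(0.0, 0.0, 0.0), k_v=1.0, k_w=4.0, dt=0.01, steps=1000):
    # Unicycle model: x' = v cos(theta), y' = v sin(theta), theta' = w,
    # integrated with forward Euler.
    x, y, th = state
    for _ in range(steps):
        dx, dy = goal[0] - x, goal[1] - y
        rho = math.hypot(dx, dy)                  # distance to the waypoint
        alpha = wrap(math.atan2(dy, dx) - th)     # heading error
        v = k_v * rho * math.cos(alpha)           # slows down when misaligned
        w = k_w * alpha                           # turns toward the waypoint
        x += v * math.cos(th) * dt
        y += v * math.sin(th) * dt
        th += w * dt
    return x, y, th


goal = learned_waypoint(image=None)
x, y, _ = go_to_waypoint(goal)
print(math.hypot(goal[0] - x, goal[1] - y))
```

The network only has to decide where to go, not how to actuate. With k_w > k_v, the heading error decays and the distance to the waypoint then shrinks exponentially, so the closed loop corrects small deviations by feedback rather than relying on the learned component to do so.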

Learning-based perception with model-based control for data-efficient navigation in unseen environments.

A brief video explaining the key idea behind our framework for autonomous navigation in completely novel environments.

3) Advancing the theory of optimal control for scalable safety analysis of learning-enabled systems

In addition to improving data efficiency, models can also be used to design learning-based systems that are analyzable. In my work, I use model-based Hamilton-Jacobi-Isaacs (HJI) reachability analysis for safe learning and exploration. HJI analysis provides both the set of safe states and the corresponding safe controller for general nonlinear system dynamics. Because the safe controller can keep the system inside this set, where all system constraints are satisfied, learning can be performed safely inside it.
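To make the idea of learning inside a safe set concrete, here is a toy least-restrictive safety filter. It assumes a 1D double integrator (x'' = u, |u| <= A_MAX) that must stay below a wall at X_MAX and has no disturbance, so it is the plain HJ special case of HJI analysis. For this system the HJ value function has a closed form, V(x, v) = X_MAX - x - max(v, 0)^2 / (2 A_MAX), so no PDE solver is needed; realistic systems require a numerical solver. The learned policy (a hypothetical stub that always accelerates) is used while V exceeds a small margin, and the safe controller (full braking) takes over otherwise. The bounds, margin, and names are assumptions.

```python
import numpy as np

A_MAX = 1.0    # control bound |u| <= A_MAX (hypothetical)
X_MAX = 1.0    # state constraint x <= X_MAX, e.g. a wall (hypothetical)
DT = 0.01
MARGIN = 0.05  # hypothetical buffer covering one discrete step of the learner


def value(x, v):
    # Closed-form HJ value function for this toy problem: distance to the wall
    # at the furthest point reached when braking at full authority from (x, v).
    return X_MAX - x - max(v, 0.0) ** 2 / (2.0 * A_MAX)


def safe_control(x, v):
    # Safety-preserving action from the HJ analysis: brake as hard as possible.
    return -A_MAX


def learned_policy(x, v):
    # Stand-in for a learned controller that explores aggressively.
    return A_MAX


def filtered_control(x, v):
    # Least-restrictive filter: trust the learner away from the safe-set boundary.
    if value(x, v) > MARGIN:
        return learned_policy(x, v)
    return safe_control(x, v)


def step(x, v, u):
    # Exact integration of the double integrator under constant u over DT.
    return x + v * DT + 0.5 * u * DT ** 2, v + u * DT


def simulate(steps=2000):
    x, v, xs = 0.0, 0.0, []
    for _ in range(steps):
        x, v = step(x, v, filtered_control(x, v))
        xs.append(x)
    return np.array(xs)


xs = simulate()
print(xs.max())
```

The printed maximum should land slightly below X_MAX: the learner accelerates freely until the filter intervenes, and full braking keeps V constant while the robot is still moving toward the wall. The margin has to cover the largest drop in V during a single learner step, which is about 2 * v * DT + DT**2 in this example.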

The main challenge is scaling HJI analysis to real-world autonomous systems, since its computational complexity grows exponentially with the number of state variables. In my work, I address this challenge on multiple fronts by leveraging (a) structure in the dynamics and control strategy, (b) offline computation, and (c) modern computational tools to make this analysis tractable.
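A back-of-the-envelope count shows where the exponential cost comes from. Assuming a dense grid with 100 points per state dimension and one 8-byte value per grid node (time-dependent solutions need more), storage alone grows as 100^n. The last lines illustrate front (a) under the hypothetical assumption that a 6D system splits exactly into two decoupled 3D subsystems; real decompositions are often only approximate.

```python
def grid_points(points_per_dim, dims):
    # A dense grid, as used by grid-based level-set solvers, has N ** n nodes.
    return points_per_dim ** dims


def memory_gb(points_per_dim, dims, bytes_per_value=8):
    # Memory for a single value function snapshot on that grid.
    return grid_points(points_per_dim, dims) * bytes_per_value / 1e9


N = 100
for n in (2, 3, 4, 6):
    print(f"{n}D: {grid_points(N, n):.0e} nodes, {memory_gb(N, n):g} GB")

# Hypothetical exact decomposition of a 6D system into two 3D subsystems.
full = grid_points(N, 6)
decomposed = 2 * grid_points(N, 3)
print(full // decomposed)  # reduction factor in grid size
```

Going from 2D to 6D takes the grid from 10^4 to 10^12 nodes (about 8 TB at 8 bytes per node), whereas the two 3D grids together need only 2 x 10^6 nodes.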

A 50-UAV reachability analysis takes less than 2 minutes with the CUDA implementation in BEACLS, compared with 2.8 hours using existing implementations.

Provably safe trajectory planning for large-scale multiple UAV systems using Hamilton-Jacobi reachability for various vehicle densities and wind speeds.

4) Safety assurance for learning-enabled systems in unknown and human-centric environments

As an autonomous system operates in its environment, it may experience changes in its dynamics or external disturbances, or it may evolve through learning-in-the-loop. Consequently, safety assurances need to be evaluated and updated at operation time. In this line of work, I build upon the tools developed in 3) to construct safety envelopes around learning-based perception and human motion prediction components, enabling robust motion plans that avoid collisions with obstacles and humans.
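As a deliberately crude stand-in for such an envelope, the sketch below assumes only that a human's speed is bounded by a hypothetical v_max, so every position the human can reach within time t lies in a disk of radius r0 + v_max * t around the last observed position (the simplest forward reachable set). A robot plan is accepted only if it stays outside this growing disk. Unlike the frameworks described above, it ignores the learned prediction entirely, which keeps it safe under the speed-bound assumption but makes it very conservative; all parameters and names are illustrative.

```python
import math


def human_envelope_radius(t, r0=0.3, v_max=1.5):
    # Forward reachable set of a human with bounded speed (hypothetical bounds):
    # every position reachable within time t lies in a disk of this radius.
    return r0 + v_max * t


def plan_is_safe(robot_plan, human_pos, dt, robot_radius=0.2):
    # robot_plan: list of (x, y) robot positions sampled every dt seconds.
    for k, (x, y) in enumerate(robot_plan):
        dist = math.hypot(x - human_pos[0], y - human_pos[1])
        if dist <= human_envelope_radius(k * dt) + robot_radius:
            return False  # the robot could be within reach of the human
    return True


human = (0.0, 0.0)
plan_away = [(2.0 + 0.2 * k, 0.0) for k in range(20)]    # 2 m/s away from the human
plan_toward = [(2.0 - 0.1 * k, 0.0) for k in range(20)]  # 1 m/s toward the human
print(plan_is_safe(plan_away, human, 0.1), plan_is_safe(plan_toward, human, 0.1))
```

The first plan outruns the growing disk and is accepted; the second enters it after about 0.6 s and is rejected, even though a real human might never move toward the robot. Using the learned prediction to shrink this envelope, while still accounting for its possible errors, is what makes such analyses useful in practice.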

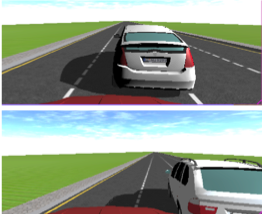

Hamilton-Jacobi reachability-based framework for computing a safety envelope around the learning-based perception component.

Combining model-based and sampling-based methods for the safety analysis of a lane-keeping perception module.

Hamilton-Jacobi reachability analysis for finding all possible changes in the data-driven human prediction model for safe robot planning.